Mark.ly is an AI-powered marking assistant that helps teachers mark faster and more consistently, and provides data-driven insights.

Teachers in Singapore dedicate a significant portion of their time to marking, yet many face challenges with grading consistency. This manual and time-intensive process not only adds to their workload but also delays crucial feedback that could drive student progress. As workloads continue to grow, the traditional approach to assessment is becoming increasingly unsustainable - underscoring the urgent need for solutions that streamline grading while enhancing the quality of feedback.

How might we design an AI-assisted grading system that lightens teachers' workload by automating routine grading tasks, improving consistency, and delivering meaningful, accurate feedback to students?

Teachers in Singapore often find themselves working long hours, not just during school but well into the evenings and weekends, marking assignments and preparing lesson plans. Teachers reportedly work about 53 hours a week, leading to high levels of stress and burnout.

The heavy workload of marking homework and exams, combined with administrative responsibilities, significantly impacts teachers' work-life balance and leads to burnout. Elisha Tushara, a former teacher, wrote in The Straits Times about her struggles to balance her heavy workload with her personal life during her teaching career.

To better understand the problem space, we conducted both qualitative and quantitative user research with MOE teachers to find out their existing challenges and how we could develop a product that better fits their needs.

Over the course of 2 weeks, we interviewed 6 MOE teachers and sent out a research survey that received 71 responses.

🎯 Goal: To better understand their marking processes and the challenges they face, as well as any experience with using alternative applications to aid in marking.

✏️ Methodology: Face-to-face/Zoom interviews

💡 Key Insights:

Exam

Teachers first mark individually, then convene with 3-5 sample scripts (good, average, bad) for calibration and discussion. Major exams take 2-3 days; minor exams, 2-3 days after school.

Homework

Marked daily after class (from 1pm). A single class's worksheets take 2-3 hours, making manual marking overwhelming. With multiple classes and other commitments (CCA, admin), timely marking is challenging. Some teachers also provide feedback, adding to the workload.

Challenges

🎯 Goal: To collect quantitative data on their sentiments about existing marking processes, the challenges they face and their receptiveness towards using AI for marking.

✏️ Methodology: Survey sent out to MOE teachers

💡 Key Insights:

Our approach follows an iterative process of user research, development and user testing to ensure Mark.ly meets the needs of educators and students effectively.

🚀 Phase 1: User Research

🚀 Phase 2: Development

🚀 Phase 3: Usability Testing & Refinement

We propose developing a localised AI-driven marking dashboard in line with MOE's curriculum. It will provide an end-to-end solution, from marking assignments to providing accurate feedback, to reduce the time teachers spend on manual marking and improve grading consistency.

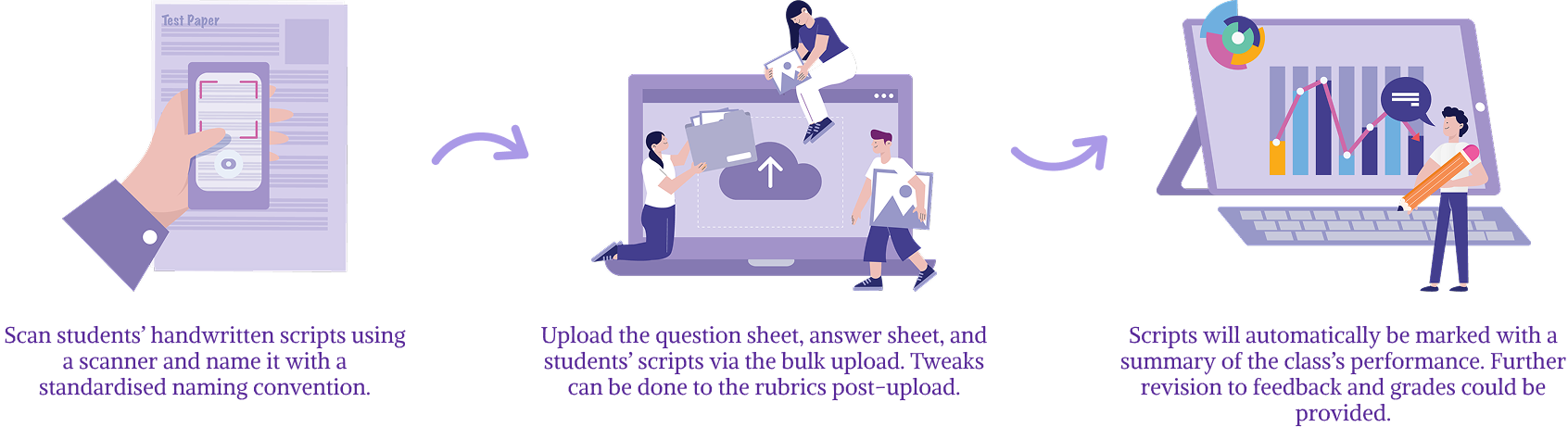

Mark.ly automates the marking process by:

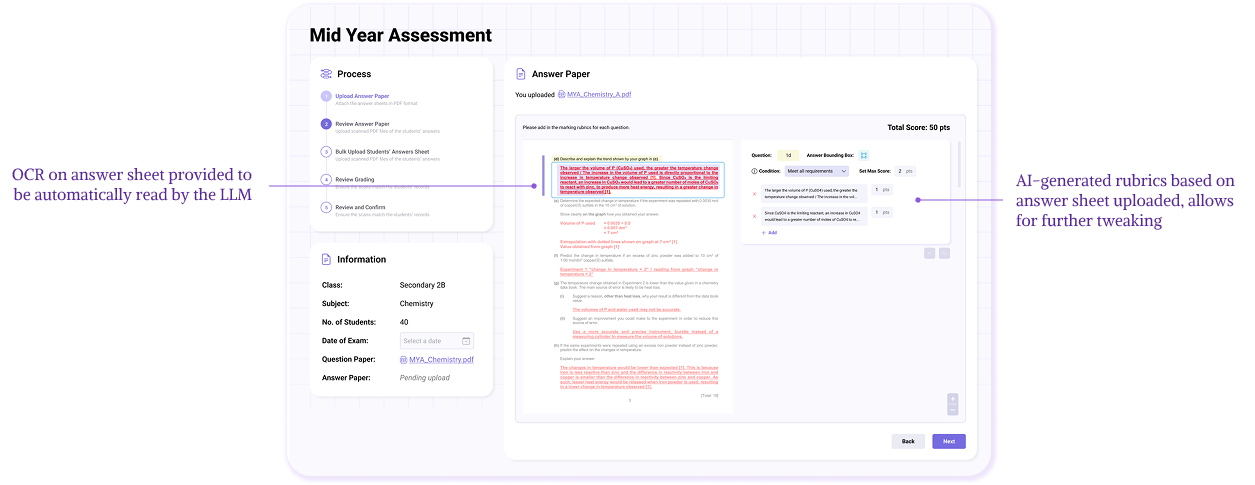

a) Uploading of the assignment's question and answer sheets (rubrics)

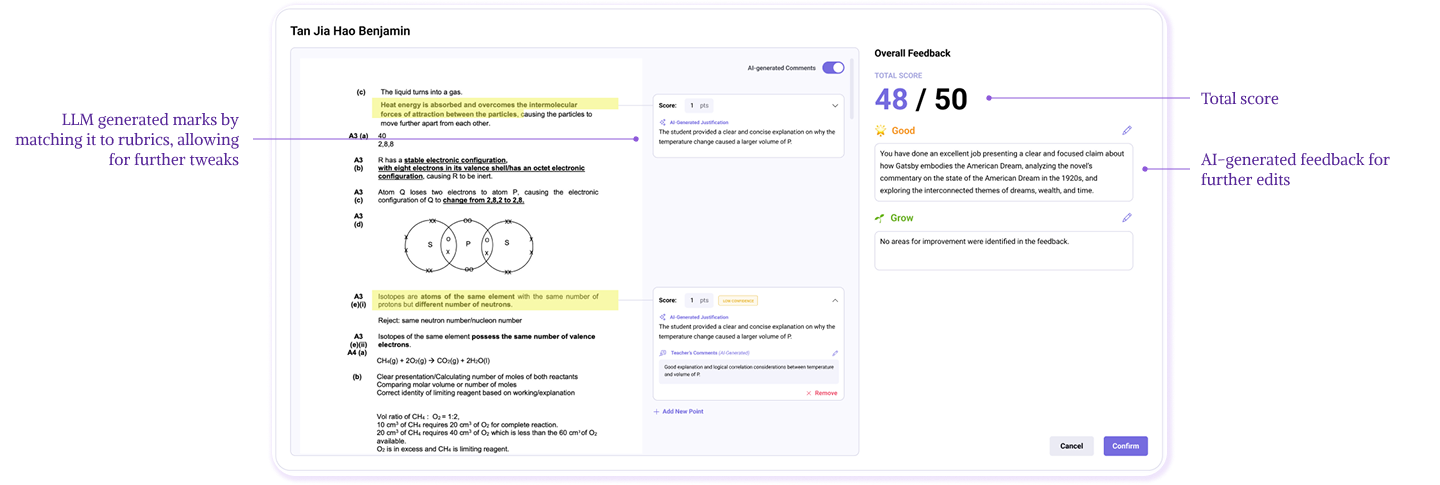

Rubrics are extracted by AI to ensure consistency across the subject's grading system. The extracted rubrics can then be fed into the LLM so that its grading stays in line with MOE's curriculum.

b) Rubrics tweaking

Teachers can tweak rubrics flexibly as circumstances change. Updated rubrics can be fed back into the LLM and applied to all current and future grading.

c) Bulk upload of scanned students' answer sheets

The scanned PDFs are converted to text via OCR for easier grading by AI. Grades are automatically assigned to individual students based on a consistent naming convention for the PDFs.
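As a rough illustration of how a naming convention could drive this sorting, here is a minimal sketch. The convention `<class>_<index number>_<student name>.pdf` and the function name `group_scripts_by_student` are assumptions made for this example; they are not Mark.ly's actual convention or code.

```python
import re
from pathlib import Path

# Hypothetical naming convention (an assumption for this sketch):
#   <class>_<index number>_<student name>.pdf, e.g. 3A_012_TanWeiLing.pdf
FILENAME_PATTERN = re.compile(
    r"^(?P<class_id>[^_]+)_(?P<index>\d+)_(?P<name>[^_]+)\.pdf$",
    re.IGNORECASE,
)


def group_scripts_by_student(filenames):
    """Map each uploaded PDF to a (class, index number) key.

    Returns a dict of matched scripts and a list of files that do not
    follow the convention, so a teacher can rename or assign them manually.
    """
    matched = {}
    unmatched = []
    for filename in filenames:
        # Only the file name is checked; any folder prefix is ignored.
        match = FILENAME_PATTERN.match(Path(filename).name)
        if match is None:
            unmatched.append(filename)
            continue
        key = (match["class_id"], int(match["index"]))
        matched[key] = {"name": match["name"], "file": filename}
    return matched, unmatched


uploads = ["3A_012_TanWeiLing.pdf", "uploads/3A_007_Ali.PDF", "scan0001.pdf"]
matched, unmatched = group_scripts_by_student(uploads)
```

Surfacing the unmatched files, rather than silently skipping them, keeps a mis-named scan from being dropped from the class's results.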

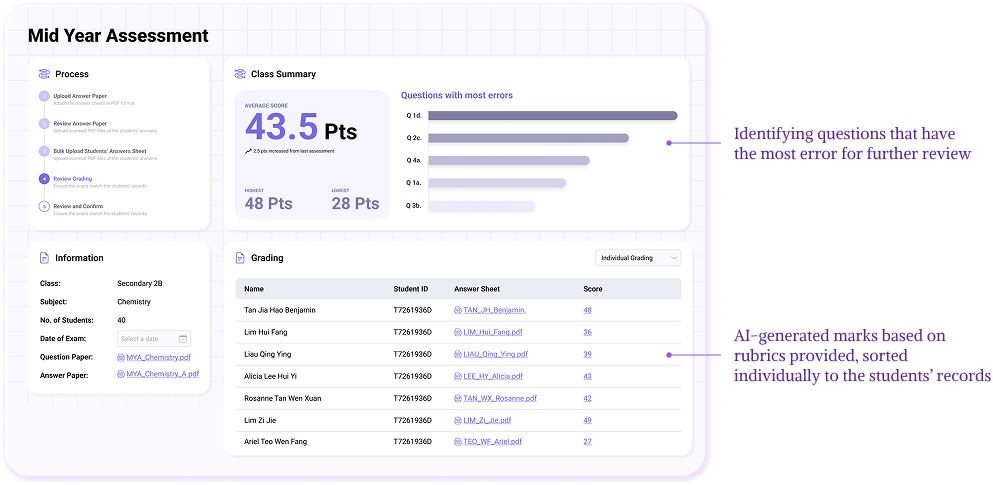

d) Overview summary of class's performance

Provides basic statistics on the class's performance, with insights into the questions students performed worst on, so that teachers can better understand the common issues students faced.
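The kind of summary described here could be computed along these lines. This is only a sketch: the data layout (a dict of per-question marks per student) and the function name `summarise_class` are assumptions for illustration, not Mark.ly's actual data model.

```python
from statistics import mean, median


def summarise_class(scores, max_marks):
    """Summarise a class's results.

    scores:    {student: [marks awarded for each question]}
    max_marks: [maximum marks for each question]
    """
    totals = {student: sum(marks) for student, marks in scores.items()}
    n_questions = len(max_marks)

    # Average percentage of the available marks earned on each question.
    question_pct = [
        mean(marks[q] for marks in scores.values()) / max_marks[q] * 100
        for q in range(n_questions)
    ]

    # Question numbers (1-based), ordered from weakest to strongest.
    weakest = sorted(range(n_questions), key=lambda q: question_pct[q])

    return {
        "mean_total": mean(totals.values()),
        "median_total": median(totals.values()),
        "question_pct": question_pct,
        "weakest_questions": [q + 1 for q in weakest],
    }


summary = summarise_class(
    scores={"A": [2, 1, 4], "B": [2, 0, 2], "C": [1, 1, 3]},
    max_marks=[2, 2, 4],
)
```

Comparing questions by percentage of available marks, rather than raw marks, keeps a 2-mark question comparable with a 4-mark one.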

e) AI-generated feedback and detailed grading

Reduces teachers' workload by providing first-cut grades and feedback for the teacher to review. Teachers can tweak the grades and feed updated rubrics back into the LLM. Students receive automated, timely feedback for each assignment.

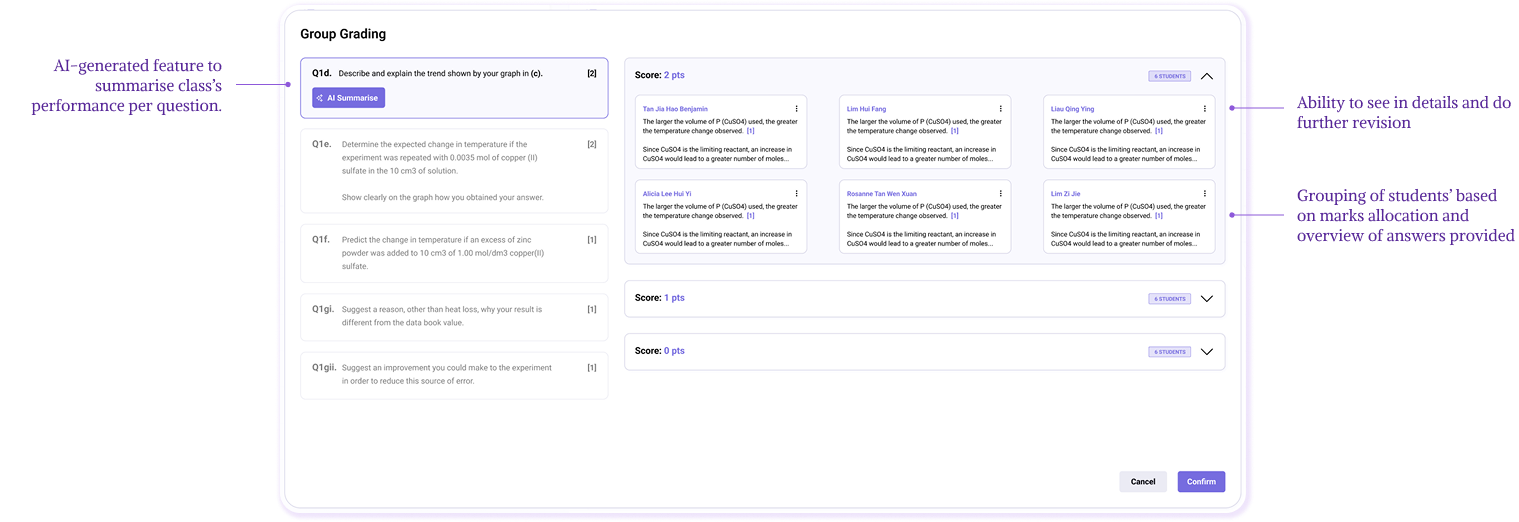

f) Group grading (in future)

Groups students with similar grades (at individual question granularity) so that teachers have a clear overview of the types of answers students wrote. This also reduces review time by letting teachers focus on the groups that did not get full marks.
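Since this feature is still planned, the following is only a sketch of one way such grouping could work for a single question, assuming marks are whole numbers. The function name `group_by_marks` and the input layout are hypothetical.

```python
from collections import defaultdict


def group_by_marks(question_scores, full_marks):
    """Group students by the marks awarded for one question.

    question_scores: {student: marks awarded for this question}
    Returns the groups that need review (lowest marks first) and,
    separately, the students who earned full marks.
    """
    groups = defaultdict(list)
    for student, marks in question_scores.items():
        groups[marks].append(student)

    # Groups below full marks, ordered from lowest to highest marks.
    needs_review = {
        marks: sorted(students)
        for marks, students in sorted(groups.items())
        if marks < full_marks
    }
    full = sorted(groups.get(full_marks, []))
    return needs_review, full


needs_review, full = group_by_marks(
    {"Aisha": 2, "Ben": 0, "Chen": 2, "Devi": 1, "Ethan": 0},
    full_marks=2,
)
```

Separating out the full-mark group reflects the aim stated above: the teacher's review time goes to the answers that lost marks.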

g) Feedback to students and data analysis (in future)

Makes grades and feedback available to students, stores past assignments online and generates a trend analysis for each student based on their history.

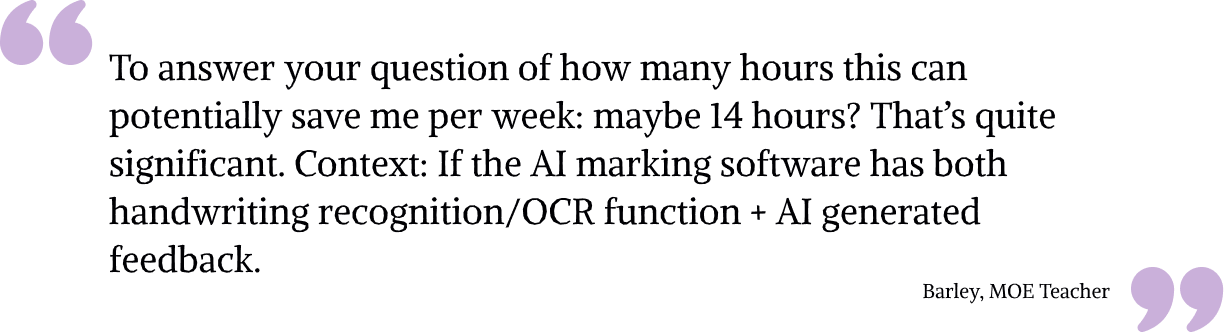

We tested Mark.ly with a few MOE teachers using their school examination questions and students' scripts. These teachers came from various schools such as Dunearn Secondary, Rosyth and ACS (Junior). The usability test mainly sought to gauge teachers' receptiveness to Mark.ly and to gather feedback on how we can improve our product to better fit their needs.

Generally, teachers were positive about the concept and development of Mark.ly. They provided valuable insights on how we could improve our product features, such as a feedback loop to students, locally stored MOE curriculum/rubrics and a student-based data bank for trend analysis.

With Mark.ly, providing grades and feedback for 1 class of 40 students takes approximately 5 minutes (not inclusive of time spent on review), at least a 50% decrease in teachers' marking time.

Beyond the hackathon, we will work closely with MOE on refining our LLM quality and driving the business needs for this product. We will also work closely with more teachers to improve our product to better suit their needs.

We will identify pilot schools (with specific subjects) to test out Mark.ly, and in the longer term we will aim to expand to a series of subjects and roll out to a wider range of schools.

Key areas of focus include:

Chunqi, Shirlynn, Yi Ning, Jack, Ahmad